Microsoft 365 Copilot can be a major productivity boost, but it is not something you should roll out with a quick license purchase and a launch email. The organizations that get value from Copilot usually do the unglamorous work first: cleaning up access, tightening governance, choosing the right pilot users, training people on useful prompts, and measuring whether the tool is actually changing how work gets done.

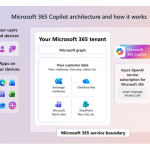

That preparation matters because Copilot works inside the Microsoft 365 environment your employees already use. It can help summarize meetings, draft documents, find information, analyze content, and support daily work across apps such as Teams, Outlook, Word, Excel, and PowerPoint. But it also depends heavily on the quality, permissions, and structure of the data already sitting in SharePoint, OneDrive, Exchange, Teams, and other connected systems.

Microsoft’s own guidance is clear on this point: Copilot uses data that a user already has permission to access, which means strong data governance directly affects the quality and safety of Copilot responses. In plain language, Copilot will not fix a messy Microsoft 365 tenant. It will expose the mess faster.

The good news is that preparing for Copilot is manageable if you treat it as an organizational change program, not just an IT deployment. Below is a practical roadmap your teams can use before, during, and after rollout.

Start with the business case, not the technology

Before anyone talks about licenses, ask a simpler question: what work do we want Copilot to improve?

This sounds obvious, but many AI rollouts begin with excitement instead of focus. Employees hear that Copilot can save time, summarize meetings, write first drafts, search across content, and help with analysis. All of that may be true, but if the organization does not define target use cases, adoption becomes scattered. Some people will use Copilot daily, some will try it once and forget about it, and some will worry that it creates more risk than value.

Start by choosing three to five business scenarios where Copilot could clearly help. For example:

- Sales teams may want faster account research, meeting preparation, and follow-up emails.

- HR teams may want help drafting policy updates, summarizing employee feedback, and preparing internal communications.

- Finance teams may want support reviewing variance explanations, summarizing reports, and preparing management narratives.

- Legal and compliance teams may want better meeting summaries, document review workflows, and controlled research assistance.

- Executives may want briefing documents, decision summaries, and faster access to relevant internal context.

The goal is not to automate every role on day one. The goal is to find repeatable, high-friction work where Copilot can remove small delays that happen every week. A 30-minute saving on one task does not sound dramatic. A 30-minute saving repeated across hundreds of employees, every week, is where the business case starts to show up.
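To make that arithmetic concrete, here is a minimal Python sketch. The headcount of 300 and the 46 working weeks per year are assumptions chosen for illustration, not figures from Microsoft; plug in your own numbers.

```python
def weekly_hours_saved(minutes_saved_per_task: float, employees: int,
                       tasks_per_week: float = 1.0) -> float:
    """Total hours saved per week across all employees doing the task."""
    return minutes_saved_per_task * tasks_per_week * employees / 60


def annual_hours_saved(minutes_saved_per_task: float, employees: int,
                       tasks_per_week: float = 1.0,
                       working_weeks: int = 46) -> float:
    """Weekly savings scaled to a year (46 working weeks is an assumption)."""
    return weekly_hours_saved(minutes_saved_per_task, employees, tasks_per_week) * working_weeks


# Hypothetical example: 30 minutes saved once a week by 300 employees.
print(weekly_hours_saved(30, 300))  # 150.0 hours per week
print(annual_hours_saved(30, 300))  # 6900.0 hours per year
```

The point is not precision. It is to show leadership how a modest per-task saving compounds once it repeats across a team every week.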

Build your launch around those scenarios. If your first message to employees is “Copilot is now available,” you are asking them to figure out the value by themselves. If your message is “Here are five ways Copilot can help you prepare for customer meetings, summarize project updates, and draft better first versions,” you are giving them a starting point.

Assess your Microsoft 365 technical readiness

Copilot depends on the Microsoft 365 foundation underneath it. If your tenant is outdated, inconsistently managed, or filled with unmanaged sharing practices, the rollout will be harder than it needs to be.

At a minimum, confirm that your users have eligible Microsoft 365 base licenses, Microsoft Entra ID accounts, Microsoft 365 Apps deployed, and the required app and network settings in place. Microsoft also notes that Microsoft 365 Copilot is available as an add-on to eligible licensing plans, and organizations can assign licenses through the Microsoft 365 admin center, partners, or a Microsoft account team.

Do not treat this as a checkbox exercise. Use the technical readiness phase to answer questions like:

- Are users on supported Microsoft 365 Apps update channels?

- Are Teams, OneDrive, SharePoint, Outlook, and Office apps configured consistently?

- Are transcription and meeting recording settings aligned with how you expect Copilot to work in Teams?

- Are privacy settings, connected experiences, and optional connected services configured intentionally?

- Are network endpoints and WebSocket requirements allowed by your security architecture?

- Is device management mature enough to support a broad rollout?

These details affect the employee experience. If Copilot appears for one group but not another, if meeting summaries are unavailable because transcription is disabled, or if users need to restart apps without knowing why, the rollout immediately feels unreliable.

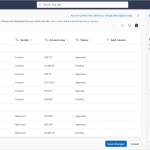

A practical move is to create a readiness dashboard before launch. It does not need to be fancy. A spreadsheet with user groups, license status, app readiness, update channel, Teams settings, OneDrive status, support owner, and known blockers is enough to keep the rollout grounded.
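If you prefer to keep that tracker in code rather than a spreadsheet, a minimal Python sketch might look like the one below. The column names mirror the list above, but the `ReadinessRow` structure and the pass/fail rule are hypothetical; in particular, the update channel is only recorded here, not validated against Microsoft's list of supported channels.

```python
from dataclasses import dataclass, field


@dataclass
class ReadinessRow:
    """One row of a hypothetical pre-launch readiness tracker."""
    user_group: str
    license_assigned: bool
    apps_ready: bool
    update_channel: str  # recorded for review; supported channels are not checked here
    teams_transcription_enabled: bool
    onedrive_ready: bool
    support_owner: str
    known_blockers: list[str] = field(default_factory=list)

    def is_ready(self) -> bool:
        # A group is launch-ready only if every check passes, it has a
        # named support owner, and no blockers are open.
        return (
            self.license_assigned
            and self.apps_ready
            and self.teams_transcription_enabled
            and self.onedrive_ready
            and bool(self.support_owner)
            and not self.known_blockers
        )


def not_ready(rows: list[ReadinessRow]) -> list[str]:
    """Names of user groups that still have gaps before launch."""
    return [row.user_group for row in rows if not row.is_ready()]


rows = [
    ReadinessRow("Sales pilot", True, True, "Current Channel", True, True, "IT Ops"),
    ReadinessRow("Finance pilot", True, False, "Current Channel", True, True, "IT Ops",
                 ["Microsoft 365 Apps not deployed"]),
]
print(not_ready(rows))  # ['Finance pilot']
```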

Clean up permissions before Copilot finds the wrong content

This is the part many organizations would rather skip. Unfortunately, it is also one of the most important.

Copilot respects Microsoft 365 permissions. Microsoft states that Copilot presents only data each individual can access using the same underlying access controls used in Microsoft 365 services. That is reassuring, but it also means Copilot may surface content that users technically have access to but should never have been granted.

Most companies have some form of oversharing. A SharePoint site created years ago may be open to the entire company. A project folder may have inherited permissions nobody has reviewed. A “temporary” external sharing link may still exist. A sensitive HR or finance document may sit in a place where too many people can see it.

Copilot does not create those permission problems. It makes them easier to notice.

Before launch, identify and fix high-risk areas:

- Sites shared with large groups such as “Everyone except external users”

- Files containing sensitive data in broadly accessible locations

- Ownerless SharePoint sites and Teams

- Old project sites with no active business owner

- Broken permission inheritance at the folder or file level

- Anonymous or overly broad sharing links

- External access that has not been reviewed recently

Microsoft recommends using tools such as Microsoft Purview and SharePoint Advanced Management to identify overshared, ownerless, inactive, or sensitive sites and content before and during Copilot deployment. This is not just a security task. It is also a quality task. If Copilot pulls from outdated, duplicated, or poorly governed content, employees will lose trust in the answers.

Think of this as spring cleaning for your knowledge estate. The goal is not perfection. The goal is to reduce obvious risk before employees start using AI to search and summarize content at scale.
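Once you have an inventory of sites, for example exported from the reports mentioned above, triage can be as simple as counting risk signals per site and starting with the worst. The Python sketch below assumes a hypothetical `SiteRecord` shape that you would map your own export onto; it is not the format of any Purview or SharePoint Advanced Management report, and the 180-day inactivity threshold is an arbitrary example.

```python
from dataclasses import dataclass

# Broad audience groups treated as high-risk grants (adjust to your tenant).
BROAD_GROUPS = {"Everyone", "Everyone except external users"}


@dataclass
class SiteRecord:
    """Hypothetical row from an exported site inventory."""
    url: str
    owners: list[str]
    granted_to: set[str]
    has_sensitive_content: bool
    anonymous_links: int
    days_since_activity: int


def risk_flags(site: SiteRecord, inactive_after_days: int = 180) -> list[str]:
    """Return the high-risk conditions from the checklist that apply to a site."""
    flags = []
    broad = bool(site.granted_to & BROAD_GROUPS)
    if broad:
        flags.append("shared with broad group")
    if broad and site.has_sensitive_content:
        flags.append("sensitive content in broadly accessible site")
    if not site.owners:
        flags.append("ownerless")
    if site.days_since_activity > inactive_after_days:
        flags.append("inactive")
    if site.anonymous_links > 0:
        flags.append("anonymous sharing links")
    return flags


def triage(sites: list[SiteRecord]) -> list[tuple[str, list[str]]]:
    """Flagged sites only, most flags first, so remediation starts with the riskiest."""
    flagged = [(s.url, risk_flags(s)) for s in sites]
    flagged = [item for item in flagged if item[1]]
    return sorted(flagged, key=lambda item: len(item[1]), reverse=True)
```

Counting flags is a deliberately crude ranking. In practice you might weight sensitive content more heavily than inactivity, but a simple ordered list is often enough to get site owners moving.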

Put governance around AI use from the beginning

Employees need to know what Copilot is for, what it is not for, and where human judgment still matters.

Create a simple Copilot usage policy before broad rollout. Keep it practical. If the policy reads like a legal memo, employees will not use it. The policy should answer everyday questions:

- Can employees paste customer information into Copilot?

- Can Copilot be used to draft external emails?

- What types of content require human review?

- Can employees use Copilot for legal, financial, medical, or compliance-sensitive analysis?

- What should users do if Copilot returns incorrect or inappropriate information?

- Are there rules for using web grounding, agents, plugins, or third-party connectors?

- Who approves new Copilot agents or extensions?

Governance should also include ownership. Microsoft’s adoption guidance recommends forming an AI council that includes an executive sponsor plus representatives from IT, change management, and risk management. That structure is useful because Copilot affects more than one department. IT can manage licenses and settings, but it should not be the only team deciding how AI changes business workflows.

A strong AI council does three things well. First, it sets boundaries so employees feel confident using Copilot without guessing what is allowed. Second, it prioritizes use cases so the rollout stays focused. Third, it reviews feedback and risk signals so the program improves instead of drifting.

Be intentional with license assignment

One common mistake is spreading Copilot licenses thinly across the company. That may feel fair, but it often weakens adoption.

Microsoft recommends assigning seats intentionally, focusing first on areas of the business where users are active in Microsoft 365 and where clear Copilot use cases exist. In practice, licensing whole teams often works better than scattering seats across individuals. When several people on the same team have Copilot, they can compare prompts, share wins, help each other troubleshoot, and redesign small workflows together.

A good first wave might include:

- One sales team that lives in Outlook, Teams, PowerPoint, and CRM-adjacent workflows

- One HR or internal communications team that creates and reviews a lot of written material

- One finance or operations team that prepares recurring reports

- One leadership group that needs faster briefing and meeting follow-up

- One IT or support group that can test technical issues before broader deployment

Be transparent about why these groups were chosen. Employees who do not receive licenses may assume they were left out unfairly. Managers may pressure IT to add licenses before the organization is ready. Clear selection criteria reduce noise.

You can say something like: “We are starting with teams that have high Microsoft 365 usage, repeatable knowledge-work scenarios, and managers committed to weekly feedback. We will expand after we validate security, adoption, and business impact.”

That message is not glamorous, but it is honest. It also helps people understand that Copilot rollout is a managed program, not a random perk.
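The selection criteria in that message can also be applied mechanically when you have more candidate teams than seats. Here is a minimal Python sketch, assuming you have already gathered a few hypothetical fields per team; the 80 percent activity threshold and the two-scenario minimum are placeholders, not Microsoft guidance.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    team: str
    m365_active_share: float   # hypothetical: share of members active in Microsoft 365 (0 to 1)
    repeatable_scenarios: int  # documented use cases for this team
    manager_committed: bool    # manager agreed to weekly feedback
    headcount: int


def pick_first_wave(candidates: list[Candidate], seats: int,
                    min_active: float = 0.8, min_scenarios: int = 2) -> list[str]:
    """Choose whole teams that meet the criteria, strongest use cases first."""
    eligible = [
        c for c in candidates
        if c.m365_active_share >= min_active
        and c.repeatable_scenarios >= min_scenarios
        and c.manager_committed
    ]
    eligible.sort(key=lambda c: (c.repeatable_scenarios, c.m365_active_share), reverse=True)
    chosen, remaining = [], seats
    for c in eligible:
        # Whole teams only: skip a team rather than license part of it.
        if c.headcount <= remaining:
            chosen.append(c.team)
            remaining -= c.headcount
    return chosen
```

Skipping a team that does not fit, instead of splitting it, reflects the whole-team reasoning above: a half-licensed team loses most of the peer-learning benefit.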

Design the pilot like a learning lab

A Copilot pilot should not be a quiet technical test where a few people receive licenses and everyone waits to see what happens. It should be structured, visible, and feedback-driven.

Microsoft’s setup guidance recommends a phased approach: pilot with a small group, deploy to a larger group, then operate by monitoring usage and adoption and making adjustments. The pilot phase is where you learn what employees actually need, not what the project team assumed they would need.

Give pilot users specific missions. For example:

- Use Copilot in Teams to summarize recurring meetings and identify action items.

- Use Copilot in Word to create first drafts of internal documents.

- Use Copilot in Outlook to summarize long email threads and draft responses.

- Use Copilot in PowerPoint to create a first version of a customer or leadership deck.

- Use Copilot in Excel to explore trends, outliers, and explanations where supported by the user’s environment.

Ask participants to capture what worked, what failed, and where they had to correct Copilot. Do not only ask whether they liked it. Ask better questions:

- Which task did Copilot make faster?

- Where did the output still need heavy editing?

- What prompt worked surprisingly well?

- What answer felt risky, incomplete, or wrong?

- What data could Copilot not find?

- What policy or training question came up?

- Would you keep using Copilot for this task next month?

The answers will shape your broader rollout. They may also reveal issues in your content architecture. If employees keep saying Copilot cannot find the latest policy, the problem may not be Copilot. The problem may be that the latest policy exists in five places and nobody knows which one is official.
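To turn those answers into something the AI council can review each week, it helps to log them in a consistent shape and summarize by task. The Python sketch below uses a hypothetical `PilotFeedback` record whose fields map to the questions above; it is one possible structure, not a Microsoft format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PilotFeedback:
    user: str
    task: str
    faster: bool         # did Copilot make the task faster?
    heavy_editing: bool  # did the output still need heavy editing?
    would_keep: bool     # would you keep using Copilot for this next month?
    missing_data: str = ""  # what Copilot could not find, if anything


def summarize(entries: list[PilotFeedback]) -> dict[str, dict]:
    """Per-task rates plus a de-duplicated list of content Copilot could not find."""
    by_task: dict[str, list[PilotFeedback]] = defaultdict(list)
    for entry in entries:
        by_task[entry.task].append(entry)
    summary = {}
    for task, items in by_task.items():
        n = len(items)
        summary[task] = {
            "responses": n,
            "faster_rate": sum(e.faster for e in items) / n,
            "heavy_edit_rate": sum(e.heavy_editing for e in items) / n,
            "keep_rate": sum(e.would_keep for e in items) / n,
            "missing_data": sorted({e.missing_data for e in items if e.missing_data}),
        }
    return summary
```

A recurring entry in `missing_data`, such as the same policy reported missing by several users, is usually a content architecture signal rather than a Copilot problem.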

Train people on work habits, not just prompts

Prompt training matters, but it is not enough. Employees need to learn how to think with Copilot inside their normal work.

A useful training program should include three layers:

First, teach the basics. Show users how to ask for summaries, drafts, comparisons, rewrites, action items, and explanations. Keep the examples close to their role. A generic “write a marketing plan” prompt will not help a finance analyst. A prompt that says, “Summarize this variance commentary for a CFO audience and flag assumptions that need support” is far more useful.

Second, teach review habits. Copilot can sound confident even when an answer needs verification. Employees should be trained to check facts, confirm sources, review tone, protect sensitive information, and understand when a human expert must approve the final output.

Third, teach workflow redesign. The real value appears when teams change how they work. A manager might use Copilot to summarize a meeting, then ask for risks, then draft follow-up notes, then convert the notes into a project update. That is different from using Copilot as a one-off writing assistant.

Microsoft’s adoption planning checklist emphasizes executive sponsorship, a cross-functional team, champions and early adopters, technical readiness, and a learning community as part of a successful Copilot rollout. The learning community is especially important. People trust examples from colleagues more than examples from a training slide.

Create a prompt library, but do not let it become a dumping ground. Organize it by role and task. Add notes explaining why a prompt works. Include bad examples too, because employees often learn faster when they see the difference between a vague prompt and a useful one.
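One way to keep a prompt library from sprawling is to give every entry the same required fields: role, task, the prompt, a note on why it works, and a weaker contrasting version. The Python sketch below is a hypothetical structure along those lines; the field names and the duplicate check are illustrative choices, and the example prompt is the finance prompt from earlier in this guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptEntry:
    role: str
    task: str
    prompt: str
    why_it_works: str
    weak_version: str  # a vague prompt to contrast against


class PromptLibrary:
    """Prompts grouped by (role, task), with exact duplicates rejected."""

    def __init__(self) -> None:
        self._entries: dict[tuple[str, str], list[PromptEntry]] = {}

    def add(self, entry: PromptEntry) -> bool:
        key = (entry.role.lower(), entry.task.lower())
        bucket = self._entries.setdefault(key, [])
        if any(existing.prompt == entry.prompt for existing in bucket):
            return False  # already in the library; keep it from becoming a dumping ground
        bucket.append(entry)
        return True

    def find(self, role: str, task: str) -> list[PromptEntry]:
        return list(self._entries.get((role.lower(), task.lower()), []))


library = PromptLibrary()
library.add(PromptEntry(
    role="Finance",
    task="Variance review",
    prompt="Summarize this variance commentary for a CFO audience and flag assumptions that need support",
    why_it_works="Names the audience and asks Copilot to surface weak assumptions.",
    weak_version="Summarize this.",
))
```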

Prepare managers for the human side of adoption

Copilot will change how work is drafted, reviewed, summarized, and handed off. Managers need to be ready for that shift.

Some employees will be excited and will quickly find ways to save time. Others will be skeptical. Some may worry that using Copilot makes their work less original. Some may fear that AI adoption is a signal that jobs are at risk. If managers are not prepared to discuss those concerns, the rollout can become awkward or uneven.

Give managers a simple talk track:

- Copilot is being introduced to reduce repetitive work and improve access to information.

- Employees are still accountable for the quality and accuracy of their work.

- Copilot outputs should be reviewed before being shared or used for decisions.

- The team will share practical examples and decide together where Copilot fits.

- Feedback is expected, including concerns about quality, risk, or usefulness.

Managers should also model responsible use. If a leader uses Copilot to draft a team update, they can say, “I used Copilot for a first draft, then edited the examples and checked the details.” That kind of transparency helps employees understand the right level of trust. Copilot is not a magic answer machine. It is a very useful assistant when paired with judgment.

Measure value in business terms

Usage is not the same as value. A dashboard may show active users, but that does not prove Copilot is improving work.

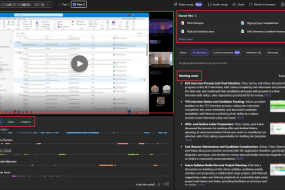

Microsoft’s adoption playbook points to the Microsoft Copilot Dashboard as a way to measure adoption and usage across rollout phases, including active users and app-level usage patterns. That data is useful, but pair it with business measures.

Depending on your goals, you might track:

- Time saved on recurring meeting summaries and follow-ups

- Reduction in time needed to prepare client or leadership briefings

- Faster first drafts for internal communications or policy documents

- Improved completion of action items after meetings

- Lower support volume for “where do I find this?” questions

- Employee satisfaction with knowledge search and document creation

- Number of reusable prompts or team workflows created

- Quality improvements in recurring reports, updates, or presentations

Collect both numbers and stories. The numbers tell you whether adoption is spreading. The stories explain why it matters.

For example, “72 percent of pilot users were active in Copilot last week” is interesting. But “the sales team cut account prep time from two hours to 45 minutes and improved manager review quality” is the kind of result that gets leadership attention.
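Both kinds of statement come from simple arithmetic. Here is a rough Python sketch of the calculations. The headcounts (36 active out of 50 licensed) and the eight account preps per week are made-up inputs, and this plain ratio may not match how the Copilot Dashboard itself defines an active user.

```python
def active_rate(active_users: int, licensed_users: int) -> float:
    """Share of licensed pilot users who were active in the period."""
    if licensed_users <= 0:
        raise ValueError("licensed_users must be positive")
    return active_users / licensed_users


def time_reduction(before_minutes: float, after_minutes: float) -> float:
    """Fractional reduction in task time; 0.625 means the task takes 62.5% less time."""
    return (before_minutes - after_minutes) / before_minutes


def hours_saved(before_minutes: float, after_minutes: float, occurrences: int) -> float:
    """Hours saved across a number of repetitions of the task."""
    return (before_minutes - after_minutes) * occurrences / 60


print(active_rate(36, 50))        # 0.72
print(time_reduction(120, 45))    # 0.625 (two hours down to 45 minutes)
print(hours_saved(120, 45, 8))    # 10.0 hours for eight account preps
```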

Secure and monitor Copilot continuously

Copilot readiness does not end at launch. Your tenant, users, content, and business processes will keep changing.

Microsoft recommends monitoring Copilot activity and data security through tools such as Microsoft Purview, including reviewing interactions, web search keywords, sensitive data activity, data risk assessments, and alerts related to insider risk or data loss. This is important because AI governance is not a one-time policy document. It is an operating model.

After rollout, schedule regular reviews:

- Monthly review of adoption, usage, and support tickets

- Monthly review of oversharing and sensitive data exposure risks

- Quarterly review of policies, training, and approved use cases

- Quarterly review of license allocation and expansion candidates

- Ongoing review of new Copilot features, agents, connectors, and admin controls
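If it helps to put that cadence on a calendar, the Python sketch below generates due dates for the monthly and quarterly reviews from a launch date. The launch date in the example is hypothetical, and the ongoing review of new features is left out because it has no fixed cadence. Month arithmetic clamps to the last day of shorter months, so a January 31 launch gets a February 28 review in a non-leap year.

```python
import calendar
from datetime import date

# Review name -> interval in months, taken from the list above.
REVIEWS = {
    "Adoption, usage, and support tickets": 1,
    "Oversharing and sensitive data exposure": 1,
    "Policies, training, and approved use cases": 3,
    "License allocation and expansion candidates": 3,
}


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping the day to the target month's length."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def review_calendar(launch: date, horizon_months: int = 6) -> list[tuple[date, str]]:
    """All scheduled reviews within the horizon, sorted by due date."""
    events = []
    for name, interval in REVIEWS.items():
        for offset in range(interval, horizon_months + 1, interval):
            events.append((add_months(launch, offset), name))
    return sorted(events)


for due, name in review_calendar(date(2025, 1, 31), horizon_months=3):
    print(due.isoformat(), name)
```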

Pay special attention to agents and connectors. Microsoft allows admins to manage agents, permissions, and whether web content can be used for grounding through the Copilot Control System in the Microsoft 365 admin center. As Copilot expands beyond core Microsoft 365 content, your governance model needs to expand with it.

The question should not be “Did we launch Copilot?” The better question is “Are we operating Copilot safely, usefully, and consistently?”

Build a 30, 60, and 90 day readiness plan

If your organization is still at the beginning, use a staged plan. It keeps the work realistic and helps leaders see progress.

First 30 days: establish the foundation

In the first month, focus on readiness and alignment. Confirm eligible licensing, identify target business scenarios, name an executive sponsor, form the AI council, and map your first pilot groups. Start a permissions and oversharing review, especially across SharePoint, OneDrive, and Teams.

This is also the time to draft your Copilot usage policy. Keep it short enough that people will actually read it. A one-page practical guide is better than a 20-page document nobody opens.

Days 31 to 60: pilot with structure

In the second month, launch the pilot. Assign licenses to selected groups, run role-based training, publish your first prompt examples, and set up weekly feedback loops. Track usage, collect stories, and document common issues.

Do not rush this stage. If the pilot uncovers overshared content, unclear policies, or weak training, fix those issues before expanding. A slower pilot can prevent a messy enterprise rollout.

Days 61 to 90: expand and operationalize

In the third month, expand to additional teams based on readiness and business value. Refine training based on pilot feedback. Update your prompt library and use case catalog. Review license allocation and remove licenses from users or teams that are not ready to use them.

By day 90, you should have a clear view of where Copilot is working, where it needs more support, and which teams should be next. You should also have a regular governance rhythm in place so Copilot stays aligned with security, compliance, and business goals.
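For teams that track the plan in a script or dashboard, the three phases reduce to a simple lookup. The Python sketch below condenses the milestones from the sections above; the label "Operate and govern" for the period after day 90 is our own shorthand for the regular governance rhythm, not a Microsoft term.

```python
# (last day of phase, phase name, milestones condensed from the plan above)
PHASES = [
    (30, "Establish the foundation", [
        "Confirm eligible licensing", "Identify target scenarios", "Name executive sponsor",
        "Form AI council", "Map pilot groups", "Start oversharing review", "Draft usage policy",
    ]),
    (60, "Pilot with structure", [
        "Assign pilot licenses", "Run role-based training", "Publish prompt examples",
        "Set up weekly feedback loops",
    ]),
    (90, "Expand and operationalize", [
        "Expand to additional teams", "Refine training", "Update prompt library",
        "Review license allocation",
    ]),
]


def phase_for_day(day: int) -> str:
    """Name of the plan phase a given day (1-based) falls into."""
    if day < 1:
        raise ValueError("day numbering starts at 1")
    for last_day, name, _ in PHASES:
        if day <= last_day:
            return name
    return "Operate and govern"  # after day 90: ongoing governance rhythm


def open_items(day: int, done: set[str]) -> list[str]:
    """Milestones in the current phase that are not yet marked done."""
    for last_day, _, milestones in PHASES:
        if day <= last_day:
            return [m for m in milestones if m not in done]
    return []
```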

Common mistakes to avoid

Even strong organizations can stumble with Copilot. The most common mistakes are usually predictable.

The first mistake is launching before permissions are reviewed. This creates avoidable anxiety because users may discover content they should never have been able to access in the first place.

The second mistake is treating training as a one-time webinar. Copilot skills improve through practice, examples, and peer learning. A launch session is useful, but it is not an adoption program.

The third mistake is assigning licenses without a plan. Copilot is more valuable when teams learn together around specific workflows.

The fourth mistake is measuring only logins. Usage matters, but business outcomes matter more.

The fifth mistake is assuming governance can wait. Employees will start experimenting quickly. If the rules are unclear, they will make their own.

Avoiding these mistakes does not require a giant transformation office. It requires discipline, ownership, and honest feedback from the people doing the work.

Final thoughts

Preparing for Microsoft 365 Copilot is really about preparing your organization for a new way of working. The technology can help people move faster, but only if the environment around it is ready.

Start with business scenarios. Clean up permissions. Set clear governance. Choose pilot users carefully. Train people with real examples. Measure value in business terms. Keep improving after launch.

If you do that, Copilot becomes more than another tool in the Microsoft 365 suite. It becomes a practical layer of assistance across the work your employees already do every day. That is where the value is.

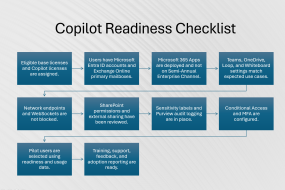

Practical readiness checklist

Use this checklist before broad rollout:

- Confirm eligible Microsoft 365 licenses and Entra ID user accounts.

- Verify Microsoft 365 Apps, Teams, OneDrive, SharePoint, Outlook, and network readiness.

- Review app update channels and device management settings.

- Identify target use cases by department or role.

- Form an AI council with executive, IT, change, risk, and business representation.

- Review SharePoint, OneDrive, Teams, and Exchange permissions.

- Remediate overshared, ownerless, inactive, or sensitive sites.

- Define Copilot usage rules and human review expectations.

- Select pilot teams based on use case fit and readiness.

- Train users with role-specific examples and review habits.

- Create a prompt library organized by task and function.

- Monitor adoption, business outcomes, security alerts, and support needs.

- Review license allocation regularly.

- Update governance as new Copilot features, agents, and connectors are introduced.

Copilot rewards organizations that prepare. The more intentional you are before launch, the more useful, trusted, and secure it becomes after launch.
