Microsoft 365 Copilot feels powerful because it can work with the information your company already has in Microsoft 365. It can summarize documents, answer questions about meetings, help draft emails, find context from chats, and connect ideas across files.

That raises a fair question: what organizational data does Copilot actually use?

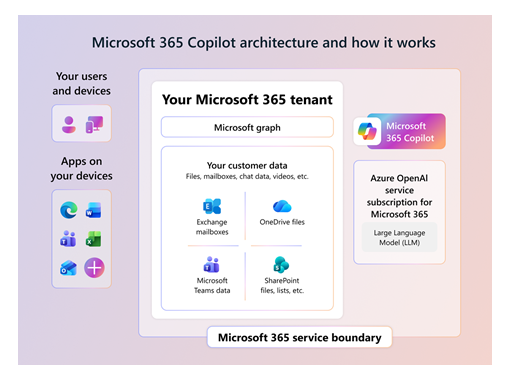

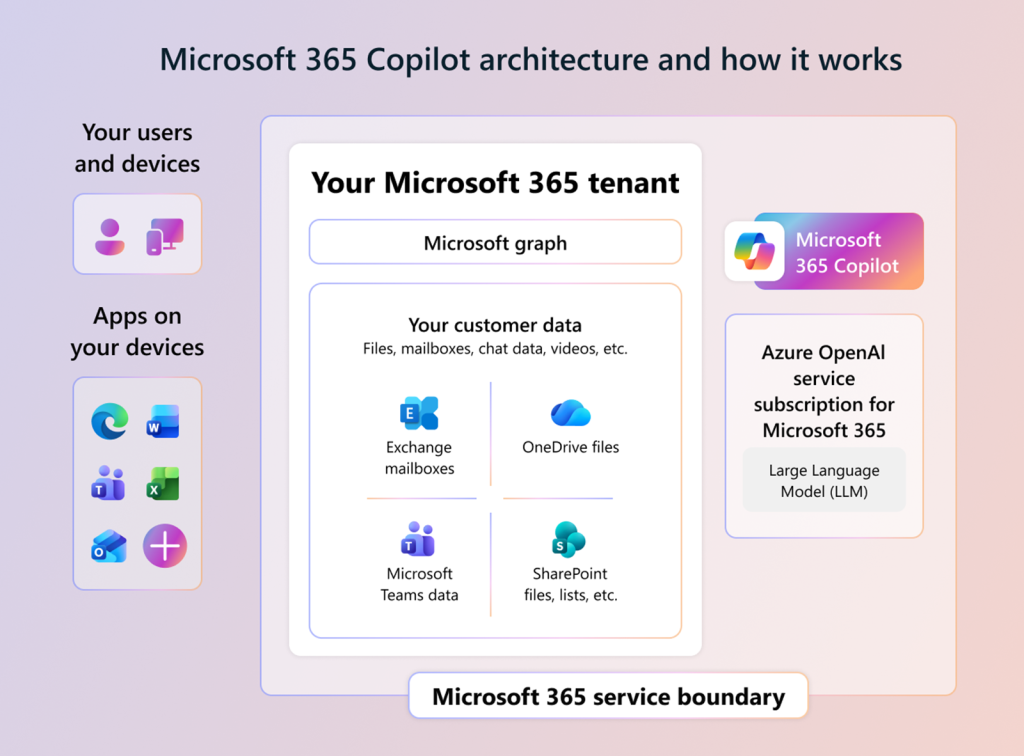

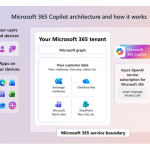

The short answer is that Microsoft 365 Copilot uses data the signed-in user is already allowed to access, and Microsoft states that Copilot does not have tenant-wide visibility. It works through Microsoft Graph, applies grounding to make prompts more relevant, and follows the same Microsoft 365 permissions, security, compliance, and privacy controls that protect your organization’s content.

The simple version

| Question | Short answer |
|---|---|
| Does Copilot use company files, emails, chats, and meetings? | Yes, when that content is relevant and the user has permission to access it. |
| Does Copilot see the entire Microsoft 365 tenant? | No. Access is scoped to the signed-in user’s permissions. |
| Is organizational data used to train foundation AI models? | No. Microsoft says prompts, responses, and Microsoft Graph data are not used to train foundation models. |
| Where does processing happen? | Within the Microsoft 365 service boundary, using Azure OpenAI services rather than public OpenAI services. |
| Do SharePoint permissions still matter? | Yes. SharePoint, OneDrive, Teams, Purview, labels, and access controls directly affect what Copilot can reference. |

Microsoft says Copilot accesses content and context through Microsoft Graph, including documents, emails, calendar items, chats, meetings, and contacts that the user is permitted to access. Microsoft also states that Copilot does not have tenant-wide visibility and that data access is scoped to the signed-in user’s permissions.

How Copilot finds useful context

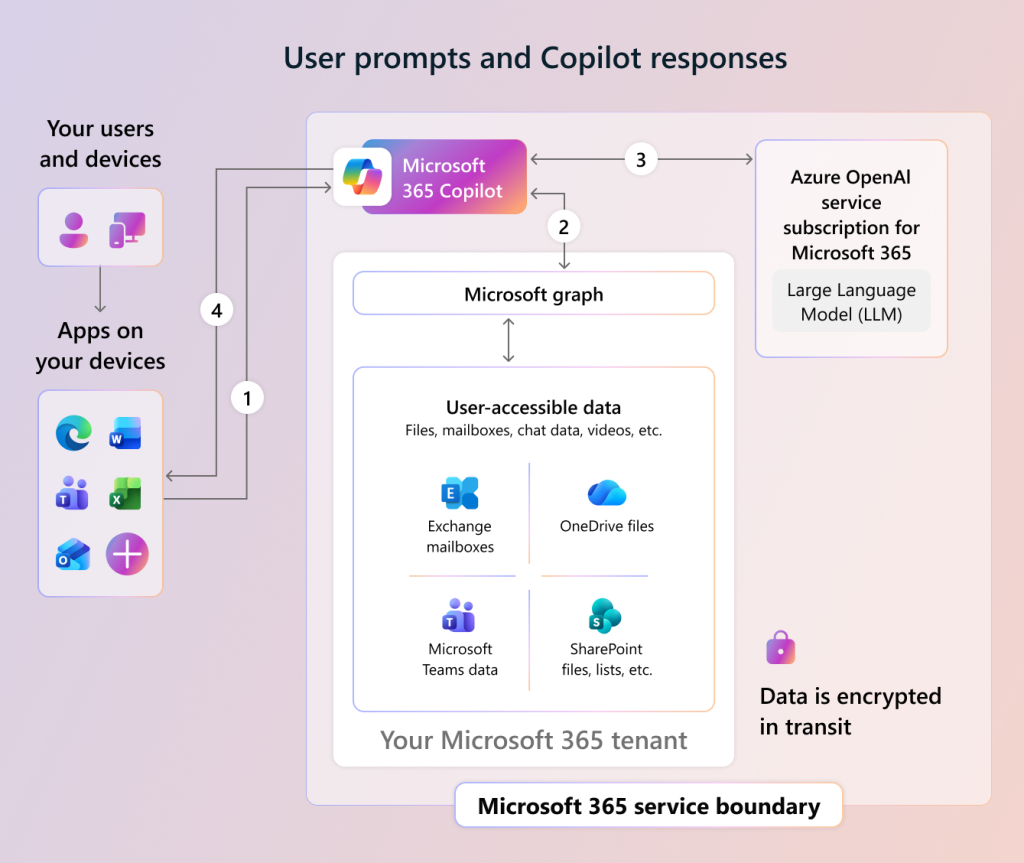

When a user enters a prompt, Copilot does not simply send that raw question to a large language model. First, it uses a process called grounding.

Grounding adds relevant business context to the prompt. That context can include files, emails, chats, calendar items, meetings, and other Microsoft 365 content the user can already access. Microsoft describes grounding as a step that improves prompt specificity and helps Copilot return more relevant and actionable answers.

Here is the basic flow:

- A user asks Copilot a question in a Microsoft 365 app.

- Copilot grounds the prompt using Microsoft Graph and the user’s Microsoft 365 context.

- The grounded prompt is sent to the language model for processing.

- Copilot returns an answer in the app the user is working in.
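The flow above can be sketched as a toy model in Python. This is only an illustration of the idea of grounding, not Microsoft's implementation: the `Item` shape, the keyword-overlap retrieval, and the names `retrieve_context` and `ground_prompt` are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    """A piece of Microsoft 365 content (hypothetical shape for illustration)."""
    title: str
    text: str
    allowed_users: frozenset


def retrieve_context(prompt: str, user: str, items: list) -> list:
    """Return items the user may access that share a word with the prompt."""
    words = {w.strip("?.,!").lower() for w in prompt.split()}
    return [
        item for item in items
        if user in item.allowed_users
        and words & set(item.text.lower().split())
    ]


def ground_prompt(prompt: str, user: str, items: list) -> str:
    """Build the grounded prompt: permitted context first, then the question."""
    context = retrieve_context(prompt, user, items)
    lines = [f"[{item.title}] {item.text}" for item in context]
    return "\n".join(["Context:", *lines, "Question:", prompt])


# Made-up sample content; "ana" and "ben" are hypothetical users.
ITEMS = [
    Item("Q3 plan", "budget for the q3 launch is approved", frozenset({"ana", "ben"})),
    Item("HR notes", "salary budget review is confidential", frozenset({"ben"})),
]

grounded = ground_prompt("What is the budget?", "ana", ITEMS)
print(grounded)
```

In this sketch the same question produces a different grounded prompt for each user, because the context is filtered by who is asking before anything reaches the language model.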

Microsoft says the data used to generate responses is encrypted in transit, and customer data stays within the Microsoft 365 service boundary.

Copilot respects existing permissions

This is the most important point for business and IT leaders: Copilot does not create new permissions.

If a user cannot open a restricted SharePoint file, Copilot should not use that file to answer that user’s prompt: Microsoft says Copilot only accesses data the user is authorized to access. Conversely, if a user has access to a Teams chat, a shared document, or a meeting transcript, Copilot may use that content when it is relevant to the request.

Microsoft says Copilot only surfaces organizational data for which individual users have at least view permissions. Microsoft also says Copilot uses Microsoft Graph to access user data in the user’s unique context, such as emails, chats, and documents the user has permission to access.

This is why oversharing matters. Copilot may make existing access problems easier to notice. If a SharePoint site is open to too many people, Copilot may be able to use that site’s content for those users because the permission already exists.
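To make the oversharing point concrete, here is a minimal sketch of how a broad group grant widens what is in scope for each user. The group names, site names, and helper functions are hypothetical, and real SharePoint permission evaluation is far more involved than this set lookup.

```python
# Hypothetical directory: group name -> members.
GROUPS = {
    "Finance Team": {"ana"},
    "All Employees": {"ana", "ben", "cho"},
}

# Hypothetical sites: site name -> principals (users or groups) with view access.
SITE_ACCESS = {
    "Payroll": {"Finance Team"},
    "M&A Drafts": {"All Employees"},  # overshared: intended for a small deal team
}


def effective_viewers(principals: set) -> set:
    """Expand groups into the individual users who end up with view access."""
    users = set()
    for principal in principals:
        # A principal that is not a known group is treated as a single user.
        users |= GROUPS.get(principal, {principal})
    return users


def sites_in_scope(user: str) -> list:
    """Sites whose content could be referenced for this user."""
    return sorted(
        site for site, principals in SITE_ACCESS.items()
        if user in effective_viewers(principals)
    )


print(sites_in_scope("ana"))  # ['M&A Drafts', 'Payroll']
print(sites_in_scope("ben"))  # ['M&A Drafts']
```

Nothing in the sketch grants `ben` anything new. The "M&A Drafts" site is in scope for him only because the existing grant to "All Employees" already includes him, which is exactly the kind of problem a permissions review should catch.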

What types of organizational data can Copilot use?

Copilot can use different Microsoft 365 data depending on the user’s license, app, admin settings, and permissions.

| Data type | Example use |
|---|---|
| Word, PowerPoint, Excel, and OneNote files | Summarize, rewrite, draft, compare, or create content |
| Outlook email | Draft replies, summarize threads, find context |
| Teams chats and meetings | Summarize discussions, identify action items, catch up on meetings |
| Calendar | Understand meeting context and scheduling details |
| Contacts and people data | Identify people, roles, and collaboration context |
| SharePoint and OneDrive content | Ground answers in documents and shared knowledge |

Microsoft lists user documents, emails, calendar, chats, meetings, and contacts as examples of organizational data Copilot can use through Microsoft Graph.

Your data is not used to train foundation models

One common concern is whether Copilot learns from company data and uses it to improve the AI model for other customers.

Microsoft says prompts, responses, and data accessed through Microsoft Graph are not used to train foundation large language models, including the models used by Microsoft 365 Copilot. Microsoft’s enterprise data protection guidance also states that prompts and responses are not used to train foundation models.

That does not mean no interaction data is stored. Microsoft says Copilot interaction data, such as prompts, responses, and referenced content, can be stored in Microsoft 365 services and managed through Microsoft Purview for auditing, eDiscovery, retention, and compliance investigations.

Security controls still apply

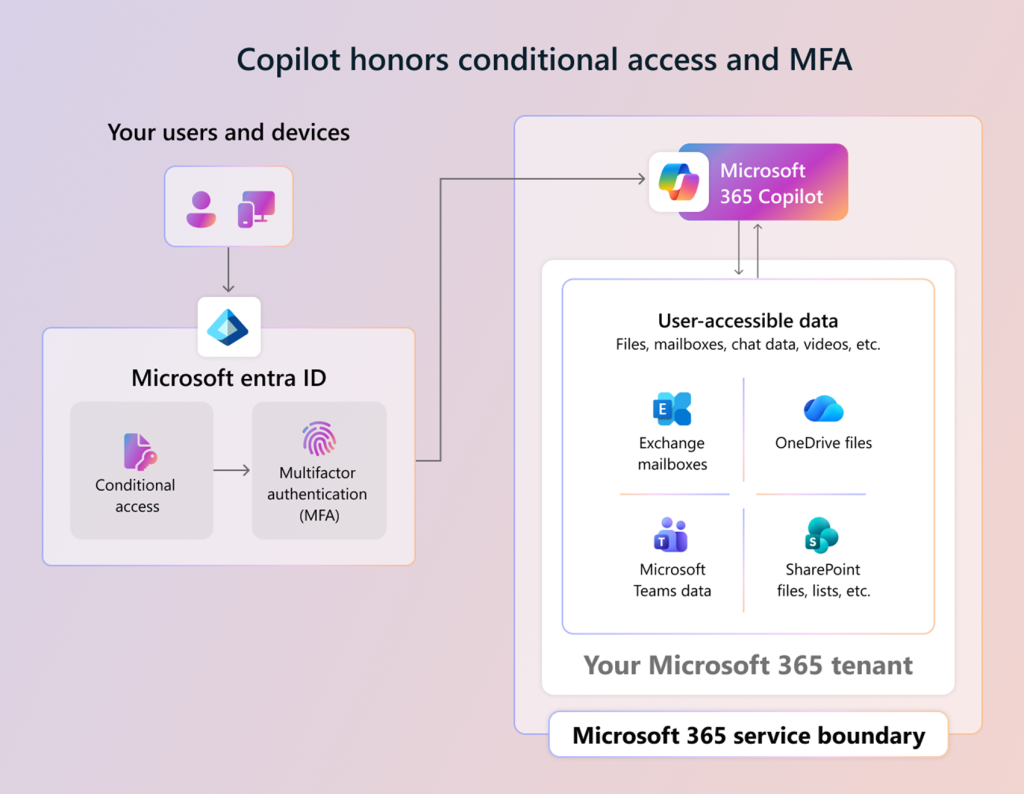

Copilot is not a separate security island. It follows Microsoft 365 controls that your organization already uses.

Important controls include:

- Microsoft Entra ID: Supports identity-based authorization and role-based access control for tenant isolation.

- Conditional Access and MFA: Copilot honors Conditional Access policies and multifactor authentication used in the tenant.

- Microsoft Purview: Supports audit, retention, eDiscovery, sensitivity labels, and compliance controls.

- SharePoint and OneDrive governance: Sharing, membership, search, discovery, lifecycle, and information protection settings affect what Copilot can reference.

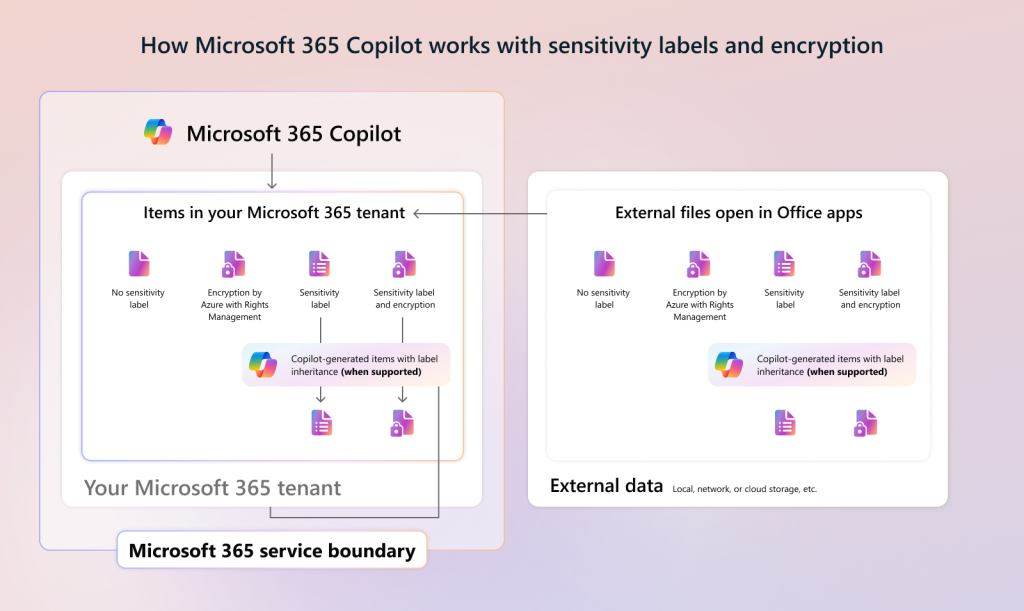

- Sensitivity labels and encryption: Copilot honors protection settings and usage rights for encrypted content.

Microsoft states that Copilot honors Conditional Access policies and MFA, using the same MFA features configured for Microsoft 365 services. Microsoft also says Copilot works with Microsoft Purview sensitivity labels and encryption, and encrypted content requires the user to have the right usage permissions for Copilot to interact with it.

Why SharePoint governance matters

For many organizations, SharePoint is the biggest Copilot readiness issue. The reason is simple: Copilot can help users find and summarize content faster, so poor permissions become more visible.

SharePoint and OneDrive access controls shape what Copilot can discover and reference, and they do so without changing the permissions users already hold. These controls include search and discovery settings, sharing and membership controls, governance and lifecycle policies, and information protection policies tied to sensitivity labels or DLP conditions.

Before rolling out Copilot broadly, admins should review:

- Overshared SharePoint sites

- Anonymous or broad sharing links

- External sharing settings

- Sites without active owners

- Sensitive files without labels

- Old content that should be archived or deleted

This is not just cleanup work. It directly affects the quality and safety of Copilot responses.
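Part of that review can be scripted once sharing data has been exported. The sketch below assumes an inventory that maps item paths to permission records. The `link.scope` and `roles` fields loosely follow the shape of the Microsoft Graph `permission` resource, but the inventory layout, the sample paths, and the `flag_broad_links` helper are assumptions for illustration, so check field names against the Graph documentation before relying on them.

```python
# Link scopes treated as "broad" in this sketch (an assumption, adjust to policy).
RISKY_LINK_SCOPES = {"anonymous", "organization"}


def flag_broad_links(inventory: dict) -> dict:
    """Map each item path to the risky sharing-link scopes found on it."""
    findings = {}
    for path, permissions in inventory.items():
        scopes = sorted({
            perm["link"]["scope"]
            for perm in permissions
            # Direct grants have no "link" entry and are skipped here.
            if perm.get("link", {}).get("scope") in RISKY_LINK_SCOPES
        })
        if scopes:
            findings[path] = scopes
    return findings


# Made-up export for illustration only.
INVENTORY = {
    "/sites/hr/Salaries.xlsx": [
        {"roles": ["read"], "link": {"scope": "organization"}},
        {"roles": ["write"], "grantedTo": "ana"},
    ],
    "/sites/sales/Deck.pptx": [
        {"roles": ["read"], "link": {"scope": "anonymous"}},
    ],
    "/sites/legal/Contract.docx": [
        {"roles": ["read"], "link": {"scope": "users"}},
    ],
}

findings = flag_broad_links(INVENTORY)
for path, scopes in findings.items():
    print(path, scopes)
```

A report like this only surfaces candidates. Whether an organization-wide link on a given file is actually a problem remains a judgment call for the site owner.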

What about agents and third-party data?

Copilot can also work with agents and connectors, depending on how your organization configures them.

Microsoft says admins can view the permissions and data access required by an agent in the Microsoft 365 admin center, along with the agent’s terms of use and privacy statement. Microsoft also says admins control which agents are allowed in the organization, and users can only access agents that are allowed and installed or assigned.

This matters because an agent may connect Copilot to data outside the usual Microsoft 365 content set. Admins should review what each agent can access, who can use it, and how its data is handled.

What admins should do before a rollout

Copilot works best when the Microsoft 365 environment is already well managed.

Use this quick admin checklist:

| Area | Admin action |
|---|---|
| Permissions | Review SharePoint, Teams, and OneDrive access before broad rollout |
| Labels | Apply sensitivity labels to confidential and regulated content |
| Sharing | Reduce anonymous links and broad access groups |
| Identity | Enforce MFA and Conditional Access where appropriate |
| Compliance | Configure Purview audit, retention, and eDiscovery policies |
| Agents | Review agent permissions, data access, privacy terms, and availability |
| User training | Teach users to verify AI output before relying on it |

Microsoft says Copilot interactions can be audited, discovered, and retained with Microsoft Purview capabilities, including audit records for prompts, responses, and referenced content.

Microsoft 365 Copilot uses organizational data to make AI responses more useful, but it does not get unlimited access to your tenant. It works through Microsoft Graph, grounds answers in the user’s work context, and follows the permissions and security controls already applied across Microsoft 365.

For users, that means Copilot can help with the files, emails, meetings, and chats they are allowed to use. For admins, it means data governance is not optional. The better your permissions, labels, sharing policies, and compliance controls are, the safer and more useful Copilot becomes.
